This morning, OpenAI is changing the terms for developers who use its API, responding to feedback from developers and users as it releases its ChatGPT and Whisper APIs.

Starting today, OpenAI says it will no longer use data submitted through its API unless the customer or organization that provided it gives permission. The company will also retain API data for only 30 days, with stricter retention options available where needed. In addition, it has simplified its language on data ownership to make clear that customers own both the input they send to its models and the output those models generate.

Greg Brockman, OpenAI’s president and chairman, says that some of these changes are not really changes at all: OpenAI API users have always owned their input and output data, whether text, images, or anything else. But the emerging legal challenges around generative AI, along with customer feedback, prompted a rewrite of the terms of service, he said.

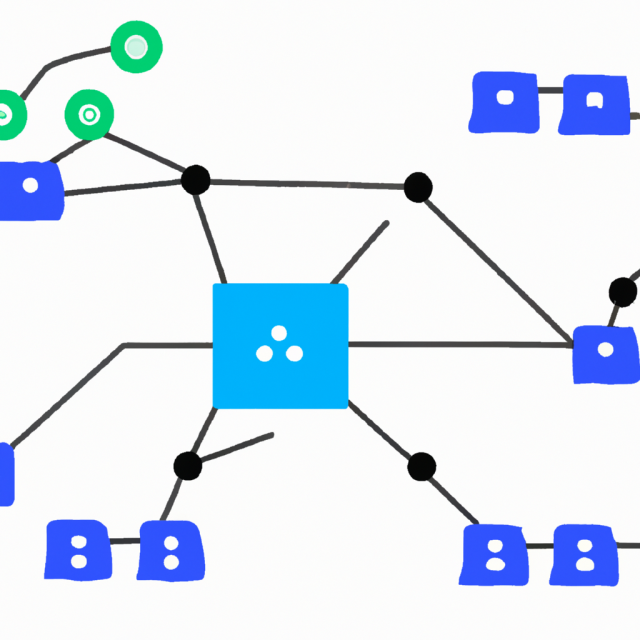

Brockman told TechCrunch that one of OpenAI’s chief goals has been to make its platform highly developer-friendly. The company’s mission, he added, is to build a platform that other companies can build their businesses on.

Developers have long objected to OpenAI’s (now outdated) data handling policy, arguing that it endangered individuals’ privacy and let the company profit from their data. In one of its own support articles, OpenAI advises users not to share sensitive information in conversations with ChatGPT because it “is unable to erase specific prompts from [users’ records].”

By letting customers opt out of submitting their data for training and by offering more data retention options, OpenAI is clearly trying to broaden its platform’s appeal. It is also aiming to scale up significantly.

OpenAI said it will replace its pre-launch review process for developers with a largely automated system. In an email, a spokesperson said the company is comfortable making this move because “the majority of applications were authorized through the assessment procedure” and because its monitoring capabilities have “significantly increased” since this time last year.

The spokesperson said the process has shifted from vetting apps in advance, where developers waited in a queue for approval based on a description of their idea, to identifying suspicious apps after the fact by monitoring their activity and investigating when warranted.

An automated system lightens the load on OpenAI’s reviewers. It could also let the company approve developers and apps for its APIs more quickly. OpenAI is under pressure to deliver a return on Microsoft’s multibillion-dollar investment. The company reportedly expects to bring in $200 million in 2023, a small sum next to the more than $1 billion that has been invested in it.